Yesterday, I wrote about How to do cross-domain GWT RPC with a ProxyServlet. Today I'll be discussing how to modify the ProxyServlet to authenticate with Spring Security. For the application I'm working on, the ProxyServlet is only used in development (when running GWT's hosted mode) and isn't necessary when deploying the client and server on the same server. Using the ProxyServlet allows cross-domain requests so you can run GWT in hosted mode and talk to your backend running on another server. This setup can be especially handy in that you can easily point your hosted client at different backends (for example, if you have testing and staging environments).

In this example, the backend application is a JSF/Spring application that has Spring Security wired in to protect services with both Basic and Form-based authentication. Basic authentication will kick in if an "Authorization" header is sent; otherwise, Form-based authentication is used. Here's the Spring Security context file that makes this happen:

<?xml version="1.0" encoding="UTF-8"?>

<beans:beans xmlns="http://www.springframework.org/schema/security"

xmlns:beans="http://www.springframework.org/schema/beans"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="...">

<http auto-config="true" realm="My Web Application">

<intercept-url pattern="/faces/welcome.jspx" access="ROLE_USER"/>

<intercept-url pattern="/*.rpc" access="ROLE_USER"/>

<http-basic/>

<form-login login-page="/faces/login.jspx" authentication-failure-url="/faces/accessDenied.jspx"

login-processing-url="/j_spring_security_check" default-target-url="/redirect.jsp"

always-use-default-target="true"/>

</http>

<authentication-provider>

<user-service>

<user name="admin" password="admin" authorities="ROLE_USER"/>

</user-service>

</authentication-provider>

</beans:beans>

The easiest way to configure your GWT application to talk to a Spring Security protected resource is to protect the HTML page that GWT is embedded in. This is the documented way to integrate GWT with Spring Security (ref: GWT's LoginSecurityFAQ, search for "Acegi").

This works well for production, but not for hosted-mode development.

Basic Authentication

To authenticate with Basic Authentication, you can use GWT's RequestBuilder and set an "Authorization" header that contains the user's (base64-encoded) credentials.

private class LoginRequest {

public LoginRequest(RequestCallback callback) {

String url = "/services/faces/welcome.jspx";

RequestBuilder rb = new RequestBuilder(RequestBuilder.POST, url);

rb.setHeader("Authorization", createBasicAuthToken());

rb.setCallback(callback);

try {

rb.send();

} catch (RequestException e) {

Window.alert(e.getMessage());

}

}

}

protected String createBasicAuthToken() {

byte[] bytes = stringToBytes(username.getValue() + ":" + password.getValue());

String token = Base64.encode(bytes);

return "Basic " + token;

}

protected byte[] stringToBytes(String msg) {

int len = msg.length();

byte[] bytes = new byte[len];

for (int i = 0; i < len; i++)

bytes[i] = (byte) (msg.charAt(i) & 0xff);

return bytes;

}
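
As a quick sanity check outside of GWT, the same header value can be reproduced with the JDK's java.util.Base64. This is only a sketch: the class name BasicAuthToken is hypothetical, and it assumes UTF-8 credentials, whereas the stringToBytes() helper above keeps only the low byte of each character.

```java
import java.nio.charset.StandardCharsets;
import java.util.Base64;

// Hypothetical helper (not part of the original post): builds the value of
// the "Authorization" header for HTTP Basic authentication.
final class BasicAuthToken {

    private BasicAuthToken() {
    }

    static String create(String username, String password) {
        // Credentials are joined with a colon and then base64-encoded
        byte[] raw = (username + ":" + password).getBytes(StandardCharsets.UTF_8);
        return "Basic " + Base64.getEncoder().encodeToString(raw);
    }
}
```

With the admin/admin user from the context file, BasicAuthToken.create("admin", "admin") yields "Basic YWRtaW46YWRtaW4=", which is handy when testing the backend with a command-line HTTP client.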

To use this LoginRequest class, create it with a callback and look for a 401 response code to determine if authentication failed.

new LoginRequest(new RequestCallback() {

public void onResponseReceived(Request request, Response response) {

if (response.getStatusCode() != Response.SC_UNAUTHORIZED &&

response.getStatusCode() != Response.SC_OK) {

onError(request, new RequestException(response.getStatusText() + ":\n" + response.getText()));

return;

}

if (response.getStatusCode() == Response.SC_UNAUTHORIZED) {

Window.alert("You have entered an incorrect username or password. Please try again.");

} else {

// authentication worked, show a fancy dashboard screen

}

}

public void onError(Request request, Throwable throwable) {

Window.alert(throwable.getMessage());

}

});

If your GWT application is included in the "services" WAR, everything should work at this point. However, if you try to log in with invalid credentials, your browser's login dialog will appear. To suppress this in the aforementioned ProxyServlet, you'll need to make a change in its executeProxyRequest() method so the "WWW-Authenticate" header is not copied.

// Pass the response code back to the client

httpServletResponse.setStatus(intProxyResponseCode);

// Pass response headers back to the client

Header[] headerArrayResponse = httpMethodProxyRequest.getResponseHeaders();

for (Header header : headerArrayResponse) {

if (header.getName().equals("Transfer-Encoding") && header.getValue().equals("chunked") ||

header.getName().equals("Content-Encoding") && header.getValue().equals("gzip") ||

header.getName().equals("WWW-Authenticate")) { // don't copy WWW-Authenticate header

} else {

httpServletResponse.setHeader(header.getName(), header.getValue());

}

}
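
HTTP header names are case-insensitive, so a backend that sends "www-authenticate" in lowercase would slip past the equals() checks above. One way to guard against that is to move the decision into a small helper that compares with equalsIgnoreCase(). This is a sketch only: ProxyHeaderFilter and shouldCopy() are hypothetical names, not part of the original ProxyServlet.

```java
// Hypothetical helper (not part of the original ProxyServlet): decides whether
// a response header from the backend should be copied to the client.
final class ProxyHeaderFilter {

    private ProxyHeaderFilter() {
    }

    static boolean shouldCopy(String name, String value) {
        // Dropping this header keeps the browser's login dialog from appearing
        if ("WWW-Authenticate".equalsIgnoreCase(name)) {
            return false;
        }
        // Same exclusions as the loop above, compared case-insensitively
        if ("Transfer-Encoding".equalsIgnoreCase(name) && "chunked".equalsIgnoreCase(value)) {
            return false;
        }
        if ("Content-Encoding".equalsIgnoreCase(name) && "gzip".equalsIgnoreCase(value)) {
            return false;
        }
        return true;
    }
}
```

Inside the loop, the body would then reduce to a single check along the lines of "if (ProxyHeaderFilter.shouldCopy(header.getName(), header.getValue())) { ... setHeader ... }".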

I'm not sure how to suppress the browser prompt when not using the ProxyServlet. If you have a solution, please let me know.

Basic Authentication works well for GWT applications because you don't need additional logic to retain the authenticated state after the initial login. While Basic Authentication over SSL might offer a decent solution, the downside is that you can't log out. Form-based Authentication allows you to log out.

Form-based Authentication

Before I show you how to implement form-based authentication, you should be aware that Google does not recommend this. Below is a warning from their LoginSecurityFAQ.

Do NOT attempt to use the Cookie header to transfer the sessionID from GWT to the server; it is fraught with security issues that will become clear in the rest of this article. You MUST transfer the sessionID in the payload of the request. For an example of why this can fail, see CrossSiteRequestForgery.

In my experiment, I didn't want to change the server-side Spring Security configuration, so I ignored this warning. If you know how to configure Spring Security so it looks for the sessionID in the payload of the request (rather than in a cookie), I'd love to hear about it. The upside of the example below is it should work with container-managed authentication as well.

The LoginRequest class for form-based authentication is similar to the previous one, except it has a different URL and sends the user's credentials in the request body.

private class LoginRequest {

public LoginRequest(RequestCallback callback) {

String url = "/services/j_spring_security_check";

RequestBuilder rb = new RequestBuilder(RequestBuilder.POST, url);

rb.setHeader("Content-Type", "application/x-www-form-urlencoded");

// encodeComponent() also escapes '&' and '=', which URL.encode() leaves alone

rb.setRequestData("j_username=" + URL.encodeComponent(username.getValue()) +

"&j_password=" + URL.encodeComponent(password.getValue()));

rb.setCallback(callback);

try {

rb.send();

} catch (RequestException e) {

Window.alert(e.getMessage());

}

}

}
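
The j_username and j_password values travel as an application/x-www-form-urlencoded body, so characters such as "&" and "=" inside a password have to be percent-encoded or the server will split the body in the wrong place. The following plain-JDK sketch shows the intended encoding using java.net.URLEncoder; the LoginFormBody class is hypothetical and only illustrates the format, it is not the GWT client code.

```java
import java.io.UnsupportedEncodingException;
import java.net.URLEncoder;

// Hypothetical helper (not part of the original post): builds the request
// body that Spring Security's /j_spring_security_check endpoint expects.
final class LoginFormBody {

    private LoginFormBody() {
    }

    static String build(String username, String password) {
        return "j_username=" + encode(username) + "&j_password=" + encode(password);
    }

    private static String encode(String value) {
        try {
            // Escapes reserved characters and turns spaces into '+'
            return URLEncoder.encode(value, "UTF-8");
        } catch (UnsupportedEncodingException e) {
            // Every JVM is required to support UTF-8
            throw new IllegalStateException(e);
        }
    }
}
```

For example, a password of "p&ss=w rd" is sent as "p%26ss%3Dw+rd", so the server still sees exactly two form fields.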

If you deploy your GWT application in the same WAR your services are hosted in, this is all you'll need to do. If you're using the ProxyServlet, there are a couple of changes you'll need to make in order to set and send cookies when running in hosted mode.

First of all, you'll need to make sure you've configured the servlet to follow redirects (by subclassing or simply modifying its default).

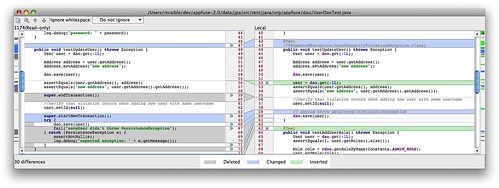

After that, add the following logic on line 358 (or just look for "if (followRedirects)") to expose the sessionID to the client. The most important part is setting the cookie's path to "/" so the client (running at localhost:8888) can see it.

if (followRedirects) {

// happens on first login attempt

if (stringLocation.contains("jsessionid")) {

Cookie cookie = new Cookie("JSESSIONID",

stringLocation.substring(stringLocation.indexOf("jsessionid=") + 11));

cookie.setPath("/");

httpServletResponse.addCookie(cookie);

// the following happens if you refresh your GWT app after already logging in once

} else if (httpMethodProxyRequest.getResponseHeader("Set-Cookie") != null) {

Header header = httpMethodProxyRequest.getResponseHeader("Set-Cookie");

String[] cookieDetails = header.getValue().split(";");

String[] nameValue = cookieDetails[0].split("=");

Cookie cookie = new Cookie(nameValue[0], nameValue[1]);

cookie.setPath("/");

httpServletResponse.addCookie(cookie);

}

httpServletResponse.sendRedirect(stringLocation.replace(getProxyHostAndPort() +

this.getProxyPath(), stringMyHostName));

return;

}
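
The first branch relies on the container appending ";jsessionid=..." to the redirect URL. Note that the substring() call above takes everything after the marker, so a query string on the redirect would end up inside the cookie value. A slightly more defensive extraction might look like the sketch below; SessionIdExtractor is a hypothetical name, and the set of terminating characters is an assumption about what can follow the session ID.

```java
// Hypothetical helper (not part of the original ProxyServlet): pulls the
// session ID out of a redirect URL such as ".../redirect.jsp;jsessionid=ABC".
final class SessionIdExtractor {

    private static final String MARKER = "jsessionid=";

    private SessionIdExtractor() {
    }

    // Returns the session ID, or null if the URL does not carry one
    static String fromLocation(String location) {
        int start = location.indexOf(MARKER);
        if (start < 0) {
            return null;
        }
        start += MARKER.length();
        // Stop at the first query string, fragment, or further path parameter
        int end = location.length();
        for (char stop : new char[] {'?', '#', ';'}) {
            int idx = location.indexOf(stop, start);
            if (idx >= 0 && idx < end) {
                end = idx;
            }
        }
        return location.substring(start, end);
    }
}
```

The returned value could then be passed to new Cookie("JSESSIONID", ...) exactly as in the snippet above.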

Click here to see a screenshot of the diff of the ProxyServlet after this code has been added.

Figuring out that headers needed to be parsed after authenticating successfully and before redirecting was the hardest part for me. If you grab the JSESSIONID from the "Set-Cookie" header anywhere else, the JSESSIONID is one that hasn't been authenticated. While the login will work, subsequent calls to services will fail.

To make subsequent calls with the cookie in the header, you'll need to make an additional modification to ProxyServlet to send cookies as headers. First of all, add a setProxyRequestCookies() method:

/**

* Retrieves all of the cookies from the servlet request and sets them on

* the proxy request

*

* @param httpServletRequest The request object representing the client's

* request to the servlet engine

* @param httpMethodProxyRequest The request that we are about to send to

* the proxy host

*/

@SuppressWarnings("unchecked")

private void setProxyRequestCookies(HttpServletRequest httpServletRequest,

HttpMethod httpMethodProxyRequest) {

// Get an array of all the cookies sent by the client

Cookie[] cookies = httpServletRequest.getCookies();

if (cookies == null) {

return;

}

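// Send all cookies in a single "Cookie" header; calling setRequestHeader()

// once per cookie would overwrite the header and forward only the last one

StringBuilder cookieHeader = new StringBuilder();

for (Cookie cookie : cookies) {

if (cookieHeader.length() > 0) {

cookieHeader.append("; ");

}

cookieHeader.append(cookie.getName()).append("=").append(cookie.getValue());

}

httpMethodProxyRequest.setRequestHeader("Cookie", cookieHeader.toString());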

}

Next, in the doGet() and doPost() methods, add the following line just after the call to setProxyRequestHeaders().

setProxyRequestCookies(httpServletRequest, getMethodProxyRequest);

After making these modifications to ProxyServlet, you can create LoginRequest and attempt to authenticate. To detect a failed attempt, I'm looking for text in Spring Security's "authentication-failure-url" page.

new LoginRequest(new RequestCallback() {

public void onResponseReceived(Request request, Response response) {

if (response.getStatusCode() != Response.SC_OK) {

onError(request, new RequestException(response.getStatusText() + ":\n" + response.getText()));

return;

}

if (response.getText().contains("Access Denied")) {

Window.alert("You have entered an incorrect username or password. Please try again.");

} else {

// authentication worked, show a fancy dashboard screen

}

}

public void onError(Request request, Throwable throwable) {

Window.alert(throwable.getMessage());

}

});
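
Because the redirect after login is followed, both a successful and a failed attempt appear to come back with a 200 status, which leaves the page text as the only signal. The decision made in the callback above can be isolated into a small function that is easy to test outside the browser. This is a sketch: the LoginOutcome class is hypothetical, and the "Access Denied" marker is simply the text this example's accessDenied.jspx page is assumed to contain.

```java
// Hypothetical helper (not part of the original post): mirrors the checks in
// the RequestCallback for form-based login.
final class LoginOutcome {

    enum Result { SUCCESS, BAD_CREDENTIALS, ERROR }

    // Assumed marker text from the authentication-failure-url page
    private static final String FAILURE_MARKER = "Access Denied";

    private LoginOutcome() {
    }

    static Result classify(int statusCode, String body) {
        if (statusCode != 200) {
            return Result.ERROR;
        }
        if (body != null && body.contains(FAILURE_MARKER)) {
            return Result.BAD_CREDENTIALS;
        }
        return Result.SUCCESS;
    }
}
```

Matching on page text is fragile (a localized or restyled error page would break it), so a dedicated status code or response header from the backend would be a sturdier signal if the server configuration can be changed.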

After making these changes, you should be able to authenticate with Spring Security's form-based configuration. While this example doesn't show how to log out, it should be easy enough to do by 1) deleting the JSESSIONID cookie or 2) calling the logout URL you have configured in your services WAR.

Hopefully this howto gives you enough information to configure your GWT application to talk to Spring Security without modifying your existing backend application. It's entirely possible that Spring Security offers a more GWT-friendly authentication mechanism. If you know of a better way to integrate GWT with Spring Security, I'd love to hear about it.

Update on October 7, 2009: I did some additional work on this and got Remember Me working when using form-based authentication. I found I didn't need as much fancy logic in my ProxyServlet and was able to reduce the "followRedirects" logic to the following:

if (followRedirects) {

if (httpMethodProxyRequest.getResponseHeader("Set-Cookie") != null) {

Header[] headers = httpMethodProxyRequest.getResponseHeaders("Set-Cookie");

if (headers.length == 1) {

extractCookieFromHeader(httpServletResponse, headers[0]);

} else {

// ignore the first header, since there always seem to be two JSESSIONID

// headers and the second is the valid one

for (int i = 1; i < headers.length; i++) {

extractCookieFromHeader(httpServletResponse, headers[i]);

}

}

}

httpServletResponse.sendRedirect(

stringLocation.replace(getProxyHostAndPort() + getProxyPath(), stringMyHostName));

return;

}
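
The extractCookieFromHeader() helper is not shown in this post. A plausible sketch of its parsing half, written in plain Java without the servlet API, might look like the following. The class name and the choice to return a name/value pair are assumptions; in the servlet, the pair would presumably be turned into a Cookie with its path set to "/" and added to the response, as in the earlier version of the code.

```java
import java.util.AbstractMap.SimpleImmutableEntry;
import java.util.Map;

// Hypothetical helper (the original extractCookieFromHeader() is not shown):
// splits a Set-Cookie header value into the cookie's name and value.
final class SetCookieParser {

    private SetCookieParser() {
    }

    static Map.Entry<String, String> parse(String headerValue) {
        // The first segment is "name=value"; the rest are attributes like Path
        String pair = headerValue.split(";", 2)[0].trim();
        int eq = pair.indexOf('=');
        if (eq < 0) {
            return new SimpleImmutableEntry<>(pair, "");
        }
        // Split on the first '=' only, so values containing '=' stay intact
        return new SimpleImmutableEntry<>(pair.substring(0, eq).trim(),
                pair.substring(eq + 1).trim());
    }
}
```

Splitting on the first "=" only matters for cookies such as a Remember Me token, whose base64-encoded value may itself end in "=".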

I was also able to remove the setProxyRequestCookies() method completely as it no longer seems necessary.

Next, I'd like to figure out how to make Spring Security more Ajax-friendly where it can read an authentication token in the request body or header (instead of from a cookie). Also, it'd be sweet if I could convince it to return error codes instead of the login page (for example, when a certain header is present).