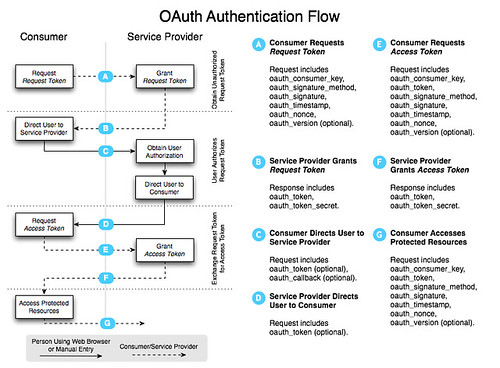

I've heard about OAuth for quite some time, but never had an opportunity to implement it on a project. For a good explanation of what OAuth is, see its Introduction. Here's an excerpt:

> ...it allows you the User to grant access to your private resources on one site (which is called the Service Provider), to another site (called Consumer, not to be confused with you, the User). While OpenID is all about using a single identity to sign into many sites, OAuth is about giving access to your stuff without sharing your identity at all (or its secret parts).

The reason I needed OAuth was to interact with the Google Contacts API. I've always hated how sites make you import all your contacts from Gmail. I wanted to develop a system that would simply let you read your contacts from Google in real time.

Since the application I'm working on uses GWT, I chose to implement an OAuth client in GWT. After googling for "gwt oauth", I found two examples. Unfortunately, neither worked out-of-the-box.

The good news is I did manage to create a working solution. The bad news is it only seems to work at random. That's right, folks: I created a solution that only works 50% of the time. I'm somewhat embarrassed to post it here, but I also realize the power of open source and community. By sharing, I hope we can find the flaws in my logic and come up with a solution for all GWT applications.

The best project for OAuth libraries seems to be oauth on Google Code. However, you'll notice that there is no JavaScript implementation listed on the homepage. I did look at the Java implementation, but quickly realized it wouldn't be usable in GWT. Therefore, I opted for the JavaScript implementation.

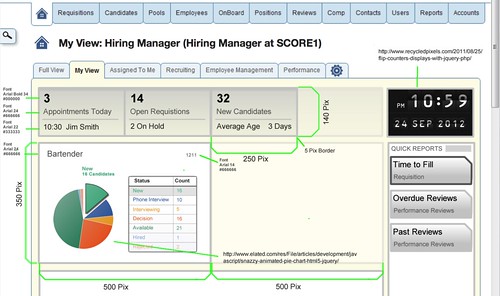

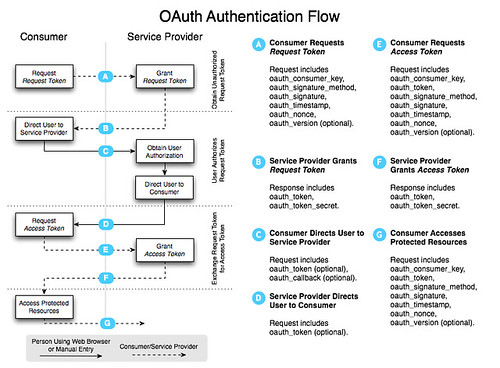

OAuth consists of several steps. The following diagram explains the authentication flow nicely.

In a nutshell, you have to complete the following steps:

- Get a request token from the service provider.
- Redirect the user to the service provider to grant access, then redirect back to the application.
- Request an access token to access protected resources.
- Access protected resources and pull/push data.
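The four steps above can be pictured as a fixed sequence. The enum below is a hypothetical illustration (`OAuthStep` and its `signed` field are not part of any OAuth library or of the demo project) that records the order of the steps and the fact that, in OAuth 1.0, the authorization step is a plain browser redirect rather than a request signed by the consumer.

```java
import java.util.Optional;

// Hypothetical model of the OAuth 1.0 flow described above.
enum OAuthStep {
    // 1. Signed request for an (unauthorized) request token.
    GET_REQUEST_TOKEN(true),
    // 2. Browser redirect to the provider; the user grants access and is sent back. Not signed.
    AUTHORIZE_TOKEN(false),
    // 3. Signed request exchanging the authorized request token for an access token.
    GET_ACCESS_TOKEN(true),
    // 4. Signed calls to protected resources using the access token.
    ACCESS_RESOURCES(true);

    final boolean signed;

    OAuthStep(boolean signed) {
        this.signed = signed;
    }

    /** The step that follows this one, or empty after the last step. */
    Optional<OAuthStep> next() {
        OAuthStep[] steps = values();
        int i = ordinal() + 1;
        return i < steps.length ? Optional.of(steps[i]) : Optional.empty();
    }
}
```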

To access a service provider's OAuth service, you'll likely need to start by registering your application. For Google, OAuth Authentication for Web Applications is an excellent resource. Google's OAuth Playground is a great way to experiment with the Google Data APIs after you've registered.

Now that you know how OAuth works, let's look at how I implemented it with GWT. I started by adding the necessary JavaScript references to my `*.gwt.xml` file.

```xml
<script src="//oauth.googlecode.com/svn/code/javascript/oauth.js"/>
<script src="//oauth.googlecode.com/svn/code/javascript/sha1.js"/>
```

Next, I needed a way to sign the request. I tried to use Sergi Mansilla's OAuth.java for this, but discovered issues with how the parameters were being written with GWT 1.6. I opted for Paul Donnelly's makeSignedRequest function instead. By adding this to my application's HTML page, I'm able to call it using the following JSNI method:

```java
public native static String signRequest(String key, String secret, String tokenSecret, String url) /*-{
    return $wnd.makeSignedRequest(key, secret, tokenSecret, url);
}-*/;
```
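For readers curious what a function like `makeSignedRequest` has to do, here is a plain-Java sketch of OAuth 1.0 HMAC-SHA1 signing. It is not Paul Donnelly's code, and it could not run in GWT client code, which has no `javax.crypto`. It builds the signature base string from the HTTP method, the base URL, and the sorted, percent-encoded parameters, then signs it with the key `consumerSecret&tokenSecret`. It assumes Java 10+ (for `URLEncoder.encode(String, Charset)`) and no repeated parameter names; `OAuthSignatureSketch` is a hypothetical name.

```java
import java.net.URLEncoder;
import java.nio.charset.StandardCharsets;
import java.security.GeneralSecurityException;
import java.util.Base64;
import java.util.Map;
import java.util.StringJoiner;
import java.util.TreeMap;
import javax.crypto.Mac;
import javax.crypto.spec.SecretKeySpec;

class OAuthSignatureSketch {

    /** RFC 3986 percent-encoding, as OAuth 1.0 requires (URLEncoder alone does form encoding). */
    static String percentEncode(String s) {
        return URLEncoder.encode(s, StandardCharsets.UTF_8)
                .replace("+", "%20")
                .replace("*", "%2A")
                .replace("%7E", "~");
    }

    /** METHOD & encoded base URL & encoded, sorted "name=value" pairs (assumes unique names). */
    static String baseString(String method, String baseUrl, Map<String, String> params) {
        TreeMap<String, String> sorted = new TreeMap<>();
        params.forEach((k, v) -> sorted.put(percentEncode(k), percentEncode(v)));
        StringJoiner normalized = new StringJoiner("&");
        sorted.forEach((k, v) -> normalized.add(k + "=" + v));
        return method.toUpperCase() + "&" + percentEncode(baseUrl) + "&" + percentEncode(normalized.toString());
    }

    /** Signs with key "consumerSecret&tokenSecret"; tokenSecret is "" before any token exists. */
    static String hmacSha1(String baseString, String consumerSecret, String tokenSecret) {
        String key = percentEncode(consumerSecret) + "&" + percentEncode(tokenSecret);
        try {
            Mac mac = Mac.getInstance("HmacSHA1");
            mac.init(new SecretKeySpec(key.getBytes(StandardCharsets.UTF_8), "HmacSHA1"));
            byte[] raw = mac.doFinal(baseString.getBytes(StandardCharsets.UTF_8));
            return Base64.getEncoder().encodeToString(raw);
        } catch (GeneralSecurityException e) {
            throw new IllegalStateException("HmacSHA1 is not available", e);
        }
    }
}
```

The token secret is empty when fetching the request token; when exchanging it for an access token (and when calling protected resources), the corresponding token secret becomes the second half of the key, which is why leaving it out produces an invalid signature.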

After the URL is signed, it needs to be sent to the provider to get a request token. To do this, I used GWT's RequestBuilder and created a send() method:

```java
protected void send(RequestCallback cb, String url) {
    RequestBuilder builder = new RequestBuilder(RequestBuilder.GET, url);
    builder.setTimeoutMillis(10000);
    builder.setCallback(cb);

    Request req = null;
    try {
        req = builder.send();
    } catch (RequestException e) {
        cb.onError(req, e);
    }
}
```

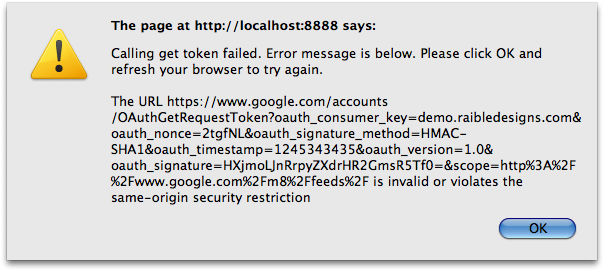

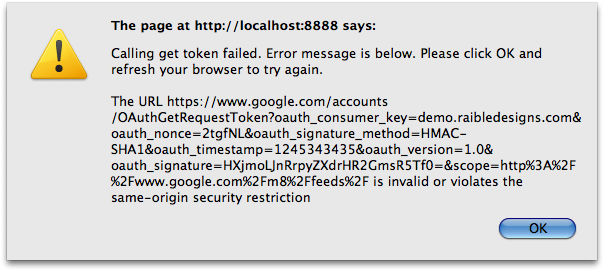

If you try this with Google's Request Token URL in GWT's hosted mode, nothing will happen. Compile and browse in Safari, and you'll still see nothing. Try it in Firefox and you'll see the following.

To work around the browser's Same Origin Policy, I added a proxy servlet to send the requests. I started with Jason Edwards's ProxyServlet and modified it to fit my needs. I then registered it in both `*.gwt.xml` and `web.xml`.

```xml
<servlet path="/google/" class="org.appfuse.gwt.servlet.AlternateHostProxyServlet"/>
```
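The core of such a proxy is mapping the local path back to the real host. Below is a minimal plain-Java sketch of that mapping only. `ProxyTargetMapper` and its parameters are hypothetical; it is not Jason Edwards's ProxyServlet or the `AlternateHostProxyServlet` above, and it assumes the caller passes the path relative to the web application's context, so deploying under a context such as `/gwt-oauth` doesn't affect it.

```java
class ProxyTargetMapper {

    /**
     * Maps a proxied path such as "/google/accounts/Foo" to "https://www.google.com/accounts/Foo",
     * keeping the query string (which carries the OAuth signature) intact.
     */
    static String toTargetUrl(String path, String queryString, String prefix, String targetBase) {
        if (!path.startsWith(prefix)) {
            throw new IllegalArgumentException("Not a proxied path: " + path);
        }
        String target = targetBase + path.substring(prefix.length());
        boolean hasQuery = queryString != null && !queryString.isEmpty();
        return hasQuery ? target + "?" + queryString : target;
    }
}
```

The query string is passed through untouched because re-encoding it could change the signed parameters and invalidate the signature.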

Now, before calling the `send()` method, I replace the start of the URL so the request is routed through the servlet.

```java
public void getToken(RequestCallback cb) {
    String url = signRequest(provider.getConsumerKey(),
            provider.getConsumerSecret(),
            "", provider.getRequestTokenURL());
    url = url.replace("https://www.google.com/", "/google/");
    send(cb, url);
}
```

When the request returns, I create two cookies by calling a createOAuthCookies() method with the payload returned:

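```java
public static String[] createOAuthCookies(String data) {
    // data is the form-encoded response body, e.g. "oauth_token=...&oauth_token_secret=..."
    String oauth_token = extractParameter(data, "oauth_token");
    String oauth_token_secret = extractParameter(data, "oauth_token_secret");

    Cookies.setCookie("oauth_token", URL.decode(oauth_token));
    Cookies.setCookie("oauth_token_secret", URL.decode(oauth_token_secret));

    return new String[]{oauth_token, oauth_token_secret};
}

// Returns the still-encoded value of "name=value", stopping at the next "&" if there is one,
// so it works whichever order the provider returns the parameters in.
private static String extractParameter(String data, String name) {
    int start = data.indexOf(name + "=") + name.length() + 1;
    int end = data.indexOf("&", start);
    return end == -1 ? data.substring(start) : data.substring(start, end);
}
```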

The next step is to authorize the token. This is where things got tricky with my proxy servlet, and I had to add some special logic for GWT. Google was sending back a 302 with a `Location` header, but the response never reached the `onResponseReceived()` method in my callback. For this reason, I changed the servlet to return a 200 status code with the redirect location in the body. I also discovered that Google sometimes returned an HTML page with a `<meta http-equiv="refresh" ...>` tag. When using Twitter, I found that the full HTML for the allow/deny page was returned.
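The servlet change described above can be sketched in plain Java, leaving out the HTTP plumbing. The hypothetical `adapt()` below takes the upstream status, `Location` header, and body, and rewrites any 3xx redirect that carries a `Location` into a 200 whose body is the URL; anything else passes through unchanged. A likely reason the raw 302 never reached the callback is that `XMLHttpRequest` follows redirects on its own instead of handing them to script.

```java
class RedirectToBody {

    /** What the proxy sends back to the GWT client. */
    static final class ProxyResponse {
        final int status;
        final String body;

        ProxyResponse(int status, String body) {
            this.status = status;
            this.body = body;
        }
    }

    /** Turns "3xx + Location" into "200 + URL in body"; other responses are passed through. */
    static ProxyResponse adapt(int upstreamStatus, String location, String upstreamBody) {
        boolean isRedirect = upstreamStatus >= 300 && upstreamStatus < 400;
        if (isRedirect && location != null && !location.isEmpty()) {
            return new ProxyResponse(200, location);
        }
        return new ProxyResponse(upstreamStatus, upstreamBody);
    }
}
```

Because the rewritten body starts with `http`, a callback that checks for a 200 whose text starts with `http` can simply navigate to it.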

Below is the callback I'm using. WindowUtils is a class I got from Robert Hanson and the gwt-widget project.

```java
public void onResponseReceived(Request request, Response response) {
    String text = response.getText();
    int meta = text.indexOf("<meta http-equiv=\"refresh\"");

    if (response.getStatusCode() == 200 && text.startsWith("http")) {
        // the proxy servlet turned Google's 302 into a 200 with the redirect URL as the body
        WindowUtils.changeLocation(text);
    } else if (meta != -1) {
        // look for meta-tag that refreshes and grab its URL;
        // the delimiters assume the usual content="0; url=..." form
        String tokenToStartWith = "url=";
        String tokenToEndWith = "\"";
        String url = text.substring(text.indexOf(tokenToStartWith, meta) + tokenToStartWith.length());
        url = url.substring(0, url.indexOf(tokenToEndWith));
        WindowUtils.changeLocation(url);
    } else if (provider instanceof Twitter) {
        // Twitter returns a full HTML page, so redirect to the authorize URL manually
        String url = provider.getAuthorizeTokenURL();
        url = url.replace("$1", OAuthRequest.getAuthToken());
        url = url.replace("$2", DefaultRequest.getCurrentLocation());
        WindowUtils.changeLocation(url);
    } else {
        onError(request, new RequestException(text));
    }
}

public void onError(Request request, Throwable caught) {
    Window.alert("Calling authorize token failed. " + OAuthPage.STANDARD_ERROR + "\n\n" + caught.getMessage());
}
```
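The meta-refresh handling could also live on the server. Here is a plain-Java sketch, assuming the proxy servlet (rather than GWT client code, which does not emulate `java.util.regex`) inspects the HTML. The hypothetical `MetaRefreshExtractor` finds a `<meta>` tag whose `http-equiv` is `refresh`, in either attribute order, and returns the URL after `url=` in its `content`, unescaping `&amp;`. The servlet could then answer with the same 200-plus-URL body the callback already handles.

```java
import java.util.Optional;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

class MetaRefreshExtractor {

    // A whole <meta ...> tag whose http-equiv attribute is "refresh".
    private static final Pattern META_REFRESH = Pattern.compile(
            "<meta[^>]*http-equiv\\s*=\\s*[\"']?refresh[\"']?[^>]*>", Pattern.CASE_INSENSITIVE);

    // Inside that tag: the URL after "url=", optionally wrapped in single quotes.
    private static final Pattern URL_IN_CONTENT = Pattern.compile(
            "url\\s*=\\s*'?([^'\">\\s]+)", Pattern.CASE_INSENSITIVE);

    static Optional<String> extract(String html) {
        Matcher meta = META_REFRESH.matcher(html);
        if (!meta.find()) {
            return Optional.empty();
        }
        Matcher url = URL_IN_CONTENT.matcher(meta.group());
        if (!url.find()) {
            return Optional.empty();
        }
        // URLs inside HTML attributes usually escape '&' as "&amp;"
        return Optional.of(url.group(1).replace("&amp;", "&"));
    }
}
```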

The third step is to get an access token. The most important thing to remember here is to include the `oauth_token_secret` value when signing the request.

```java
signRequest(provider.getConsumerKey(), provider.getConsumerSecret(),
        getAuthTokenSecret(), url);
```

After this completes with a 200, I create the cookies again (since `oauth_token` and `oauth_token_secret` are returned in the body), then call the API to get a list of contacts. The `ContactsRequest` class is responsible for making the call. The `DefaultRequest` class contains the `send()` method as well as utility methods to get the cookie values of the OAuth tokens.

```java
public class ContactsRequest extends DefaultRequest {
    private static final String GOOGLE_CONTACTS_URL =
            "http://www.google.com/m8/feeds/contacts/default/thin?oauth_token=$1";

    private OAuthProvider provider;

    public ContactsRequest(OAuthProvider provider) {
        this.provider = provider;
    }

    public void getContacts(RequestCallback cb) {
        String url = GOOGLE_CONTACTS_URL.replace("$1", getAuthToken());
        url = signRequest(provider.getConsumerKey(), provider.getConsumerSecret(),
                getAuthTokenSecret(), url);

        String proxiedURLPrefix = "/contacts/";
        // allow for deploying at /gwt-oauth context
        if (WindowUtils.getLocation().getPath().contains("gwt-oauth")) {
            proxiedURLPrefix = "/gwt-oauth" + proxiedURLPrefix;
        }

        url = url.replace("http://www.google.com/", proxiedURLPrefix);
        send(cb, url);
    }
}
```

If all goes well, the response contains the data you requested and it's used to populate a textarea (at least in this demo application). Of course, additional processing needs to occur to parse/format this data into something useful.
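As a starting point for that processing, here is a sketch that pulls names and email addresses out of an Atom contacts feed. It is plain Java using the JDK's DOM parser (in GWT client code, `com.google.gwt.xml.client.XMLParser` would play that role instead), and it assumes entries use the Atom namespace for `entry` and `title` and the GData namespace (`http://schemas.google.com/g/2005`) for `<gd:email address="...">`. The class name and the "Name <email>" output format are hypothetical.

```java
import java.io.IOException;
import java.io.StringReader;
import java.util.ArrayList;
import java.util.List;
import javax.xml.parsers.DocumentBuilderFactory;
import javax.xml.parsers.ParserConfigurationException;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;
import org.xml.sax.InputSource;
import org.xml.sax.SAXException;

class ContactsFeedParser {
    static final String ATOM_NS = "http://www.w3.org/2005/Atom";
    static final String GD_NS = "http://schemas.google.com/g/2005";

    /** Returns one "Name <email>" string (or just the email if there is no name) per address. */
    static List<String> parse(String xml) {
        try {
            DocumentBuilderFactory factory = DocumentBuilderFactory.newInstance();
            factory.setNamespaceAware(true); // needed for getElementsByTagNameNS
            Document doc = factory.newDocumentBuilder().parse(new InputSource(new StringReader(xml)));

            List<String> contacts = new ArrayList<>();
            NodeList entries = doc.getElementsByTagNameNS(ATOM_NS, "entry");
            for (int i = 0; i < entries.getLength(); i++) {
                Element entry = (Element) entries.item(i);
                NodeList titles = entry.getElementsByTagNameNS(ATOM_NS, "title");
                String name = titles.getLength() > 0 ? titles.item(0).getTextContent().trim() : "";
                NodeList emails = entry.getElementsByTagNameNS(GD_NS, "email");
                for (int j = 0; j < emails.getLength(); j++) {
                    String address = ((Element) emails.item(j)).getAttribute("address");
                    contacts.add(name.isEmpty() ? address : name + " <" + address + ">");
                }
            }
            return contacts;
        } catch (ParserConfigurationException | SAXException | IOException e) {
            throw new IllegalArgumentException("Could not parse contacts feed", e);
        }
    }
}
```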

This all sounds pretty useful for GWT applications, right? I believe it is, but only if it works consistently. I sent a message to the OAuth Google Group explaining the issues I've had.

> I'm trying to use the JavaScript API to authenticate with OAuth from a GWT application. I've got it working with both Google and Twitter's OAuth implementations. However, it seems to fail to sign the URL at random. In other words, it works 1 out of 3 times.
>
> ...
>
> Any idea why this could be happening?

I received a response with a cleaner `makeSignedRequest()` function. I tried it and, unfortunately, it seems to be equally unreliable. I suspect the problem lies in the OAuth JavaScript implementation or in GWT's interpretation of it, or else that OAuth isn't as mature as it appears to be. I'd like to think one of the first two is the cause.

To make it easier to create a robust example of GWT and OAuth, I created a gwt-oauth project you can download or view online. Please keep in mind the demo is likely to be flaky. If you're persistent and try enough times, it's likely to work. Firefox seems to succeed more often than Safari or Chrome. If you have any suggestions for improving this example, please let me know.